SNYCU Ep. 155 - Oct. 21, 2020

In this episode we discuss the September indexing issues, Google's SearchOn event and what we learned, changes to the Quality Raters' Guidelines, and a compilation of the latest SEO news and tips.

Marie’s Podcast for this episode

If you would like to subscribe, you can find the podcasts here:

Apple Podcasts | Spotify | Google Play | Soundcloud

Ask Marie an SEO Question

Have a question that you want to ask Marie? You can ask it on our Q&A with Marie Haynes Consulting page, and Marie will answer some of the best questions each week in the podcast!

In this episode:

- Algorithm Updates

- The latest on the September indexing issues (including September 21)

- October 13, 2020 - Possible update (but likely not)

- Here’s what we learned from Google's SearchOn event

- What has changed in the updated QRG?

- MHC Announcements

- Need help with a manual action?

- Google Announcements

- Mixed content in Chrome is ‘no longer really a thing’

- Google launches Journalist Studio

- Chrome Dev Summit is scheduled for December 2020

- Google SERP Changes

- Free Shopping tab listings are now launched globally

- SEO Tips

- Reminder: if it is not on your mobile page it will not count for indexing

- Google relies on consistent signals from across the web

- A series of small things a new brand can do to help build trust

- It seems How-to schema has fully rolled out on desktop, plus some interesting findings related to it

- Brush up on your Google Search operators

- How to measure and optimize Largest Contentful Paint (LCP)

- Google Help Hangout Tips

- Google discusses E-A-T and how it relates to health and medical sites

- Interesting comments about frequent changes to your meta robots tags

- Could slower URLs be negatively impacting the rest of your site?

- Other Interesting News

- “Request Indexing” in GSC is not working

- A great summary of what is new regarding the recent ‘new’ Google Analytics announcement

- Possible data anomalies in Google Search Console for Web Stories

- Justice Department set to file long-awaited antitrust suit against Google

- Is there any ‘indexing time’ related to backlinks?

- WordPress owners, say goodbye to Facebook and Instagram support for WP embeds

- Local SEO

- Local SEO - Tips

- How to get a place label or icon in Google Maps

- Local SEO - Other Interesting News

- Asking webmasters to submit examples -- possibly inaccurate wait times showing in restaurant GMBs

- SEO Tools

- New Microsoft Site Explorer shows how Bing sees your website

- Core Web Vitals report from Ryte is arriving soon

- Recommended Reading

- Recommended Reading (Local SEO)

- Jobs

Algorithm Updates

The latest on the September indexing issues (including September 21)

We have been doing our best to determine what happened at Google in mid-September to cause so many issues with indexing. As we have been reporting in the last few episodes of this newsletter, Google told us that there were two issues at this time. One involved canonicalization, where they were wrongly canonicalizing some URLs to others. The other involved a problem with mobile indexing.

In last week’s newsletter we reported that Google had restored 99% of the URLs impacted by the mobile indexing issue. At that point, only 55% of the URLs affected by the canonical issue were fixed. Now, Google says that the canonical issue was fixed as of Wednesday, October 14.

Final update: the canonical issue was effectively resolved last Wednesday, with about 99% of the URLs restored. We expect the remaining edge cases will be restored within a week or two.

— Google SearchLiaison (@searchliaison) October 19, 2020

Last week, Marie reported on an interesting case with a client of ours where they appeared to be affected by one of these bugs starting on September 21. We mentioned that we were investigating an interesting finding with which keywords were affected. However, it turns out that this client had started some thin content cleanup at the exact same time. Many pages were either noindexed or removed. As such, we did not go further in analyzing their interesting traffic pattern as it would not be typical of most sites that were affected September 21.

We do have a small handful of clients in our portfolio who appear to have been strongly affected by these indexing issues, and have not seen recovery yet.

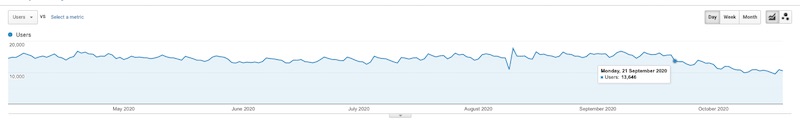

Here is Google organic traffic for one of these clients. You can see that a downward slide started on September 21, and recovery has not happened.

The drop affected different pages to different degrees (which is not typical of what we tend to see with most Google updates related to quality). When we reviewed the affected pages, we could see that they are still in the index. However, we can only get them to surface if we search for exact text that is found on the page. In other words, the page is in the index, but is barely ranking for anything. It seems to us that there is some type of filter on these pages suppressing them from ranking for anything significant. It is also worth noting that the pages that are affected are not spammy...they are good pages in our opinion.

We had hoped to spend more time on this ranking issue. However, given that this week we got a new version of the Quality Raters’ Guidelines, and learned some incredibly exciting stuff about Google’s algorithms via the SearchOn event, we did not dedicate as much time to this as we had hoped.

October 13, 2020 - Possible update (but likely not)

We had a good number of sites in our portfolio see positive changes in Google organic traffic starting on or around October 13, 2020. Given that Google told us that as of October 14 they had resolved 99% of the canonicalization problems affecting indexing, we feel that these changes in traffic are more likely to be related to the indexing issues being resolved than to an algorithm update.

Here’s what we learned from Google's SearchOn event

This event got us really excited about what Google has planned for upcoming algorithm updates!

You can read Marie’s tweet thread summary of the event for a rundown of everything Google announced:

Here are my notes I took from the SearchOn event:

-Initially BERT was used for 1 out of 10 queries. Now, it is used in almost EVERY English search.

-BERT is "particularly good at understanding the intent behind queries."

-Google detects more than 25 billion spammy pages PER DAY

— Marie Haynes (@Marie_Haynes) October 15, 2020

Google announced a new product, Pinpoint, that will help journalists find information across thousands of documents. They were kind of vague in their description, but when they described using AI to find connections amongst vast amounts of data, it sounded very interesting!

Google also announced future improvements to Google Lens whereby a searcher can take a picture of a piece of clothing and then be shown where to buy it, and items to style it with.

We were super excited to hear that you can now hum into your phone and Google will tell you what song you are humming! With that said, either this feature needs work, or we are really bad at humming because it is not too accurate just yet.

Predictions about future Google updates based on the SearchOn event

In our opinion, the most important part of the event was where Google talked about the advancements they have made with BERT. A year ago, BERT was used in one of ten searches. In the SearchOn event, Google told us that almost every single English search makes use of BERT now.

Why is this important? When Google first announced their use of BERT, they said BERT was “particularly useful for understanding the intent behind search queries”.

Google wants to better understand the meaning behind a search.

They also announced that they will be better able to identify passages on a page and whether a particular passage is a relevant result for a search query. In other words, instead of Google’s algorithms saying, “Ah, this page is a good result for this search query!”, they will be able to say, “This section of this page is a good result for this search query.”

We anticipate that this will make good use of headings very important. Also, expert level content will likely be recognized as such and rewarded.

This change is predicted to impact 7% of search queries. This is HUGE. To put this in context, the initial Panda rollout impacted 12% of queries, while Penguin affected 3%.

Last week, we shared about how we will soon identify individual passages of a web page to better understand how relevant a page is to a search. This will be a global change improving 7% of queries:https://t.co/iQoXktmSkt

In this thread, more about how it works…. pic.twitter.com/2oqdoCkt6r

— Google SearchLiaison (@searchliaison) October 20, 2020

We have an article coming out this week with more of our thoughts on this along with ways that you can start improving your content so that it truly is the best option for Google to show searchers.

What has changed in the updated QRG?

We now have a new version of Google’s Quality Raters’ Guidelines. Each time Google updates these guidelines, we pay very close attention!

While there were many changes made with this update, we do not feel that the majority of them give us clues about what Google wants to see in terms of quality. There were many administrative changes including:

- Information to tell the raters that the examples given in the QRG are just “snapshots” and not representative of the current site.

- Information to help the raters know what to do if they encounter malware or pages that don’t load while doing their research.

- Help determining when to rate a page as “Foreign Language”.

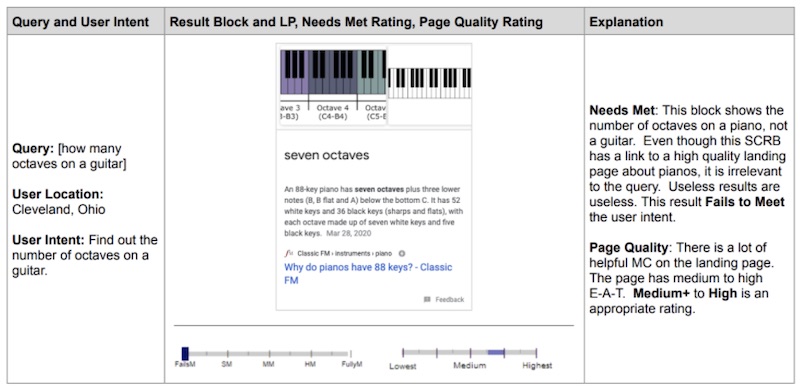

However, there was one big change that we think is extremely important. They added several examples to help the raters determine how to rate a page in terms of “Needs Met”. For each page a rater visits, they are to decide whether the page met the needs of the person who typed in a query.

Here is one of the examples given. You can see that raters are told to rate this page as medium to high in terms of Page Quality, but, “Fails to Meet” when it comes to Needs Met.

Although this seems like a small change, we are excited by it. In the SearchOn event (discussed in another section of this newsletter), we learned about how Google is using BERT to get better at understanding the intent behind a query. We also learned that Google will look at passages on pages now rather than the entire page itself to determine if it is a good fit.

If the Quality Raters are being given more instruction on how to determine whether content is meeting a searcher’s needs, and Google’s algorithms are getting better at recognizing intent and relevancy, we anticipate that with the next core update we will see nice improvements in traffic for websites that can produce content that truly is the best and most helpful of its kind.

Stay tuned for an article on this subject which we hope to have live very soon.

MHC Announcements

Need help with a manual action?

We recently published the updated version of our guide to unnatural links penalties. The book will help you understand what a manual action is and how to have one removed, and it includes a thorough guide to unnatural links. Be one of the first to purchase a copy here.

We updated our Complete Guide to Unnatural Links Recovery for 2020, a book to help you understand and come back from an unnatural links penalty. @Marie_Haynes and team have successfully removed hundreds of unnatural links penalties.

Purchase it here: https://t.co/rtrHuvMmF4

— Marie Haynes Consulting 🐼 (@mhc_inc) October 20, 2020

Google Announcements

Mixed content in Chrome is ‘no longer really a thing’

Emily Stark, a security engineer with Google Chrome, tweeted that Chrome now handles mixed content on a page by either upgrading it to HTTPS or blocking it outright. She discussed how a good portion of mixed content came down to “accidental developer error”. By automatically upgrading HTTP subresources on a page to HTTPS (for example, an image, which is typically also available over HTTPS ~50% of the time), Chrome can rectify many of these issues. In instances where a resource won’t load over HTTPS, Chrome will block it by default.

1/ After a very long gradual rollout, thanks to @carlosjoan91's efforts, mixed content is no longer really a thing in Chrome: any http:// subresources on https:// pages will be either upgraded to https:// or blocked. (https://t.co/8dUeTgbTXA)

— Emily Stark (@estark37) October 14, 2020
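If you want to check your own pages for leftover `http://` subresources, something like the following rough sketch may help. It is our own illustration and not how Chrome does it: the tag list is a simplified subset, and the helper names (`find_mixed_content`, `upgrade_to_https`) are made up for this example.

```python
from html.parser import HTMLParser

# Tags that load subresources, mapped to the attribute holding the URL.
# This is a simplified, illustrative subset, not Chrome's actual rules.
SUBRESOURCE_ATTRS = {
    "img": "src",
    "script": "src",
    "iframe": "src",
    "audio": "src",
    "video": "src",
}


class MixedContentFinder(HTMLParser):
    """Collects (tag, url) pairs for subresources requested over http://."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        wanted = SUBRESOURCE_ATTRS.get(tag)
        if wanted is None:
            return  # ordinary links such as <a href> are not subresources
        for name, value in attrs:
            if name == wanted and value and value.lower().startswith("http://"):
                self.insecure.append((tag, value))


def find_mixed_content(html):
    """Return the list of insecure subresources found in an HTML string."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


def upgrade_to_https(url):
    """Rewrite an http:// URL to https://, leaving other URLs untouched."""
    if url.lower().startswith("http://"):
        return "https://" + url[len("http://"):]
    return url


if __name__ == "__main__":
    page = '<img src="http://example.com/logo.png"><a href="http://example.com/">Home</a>'
    for tag, url in find_mixed_content(page):
        print(tag, url, "->", upgrade_to_https(url))
```

Before changing a reference in your templates, it is worth confirming that the upgraded URL actually loads over HTTPS, since Chrome will block the ones that do not.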

Google launches Journalist Studio

This is an awesome resource that helps journalists work more effectively, and it includes a series of useful tools. Our favourite has to be the fact checker explorer, which allows you to search fact check results from the web related to an entity. At times we use this tool as a quick way to show clients that their YMYL content may not align with scientific/general consensus.

Google launches Journalist Studio:

"Tools to empower journalists to do their work more efficiently, creatively, and securely": https://t.co/M970w8aGzz

Including:

* A fact checker explorer: https://t.co/i6bE7Up2xl

* Public Data explorer: https://t.co/UsCtMg61Mq

...

— Aleyda Solis 🕊️ (@aleyda) October 15, 2020

Chrome Dev Summit is scheduled for December 2020

The eighth annual instalment of Chrome’s Dev Summit promises developers an opportunity to learn about the latest tools and updates coming to the Google Chrome browser. The site’s FAQ page breaks this down as “...Platform updates, architectural guidance, developer tooling, case studies, and opportunities for increased reach and engagement on the web.”

This year’s two-day virtual event (held December 9-10) will have publicly available sessions. However, if you are looking to partake in the workshops or office hours, or to join the live Q&A, we would encourage you to request an invite.

🗣 Word on the street is that #ChromeDevSummit is back!

Be sure to save the date and request an invite. You won't want to miss out ⬇️ https://t.co/ku9uBaeZWq

— Google Search Central (@googlesearchc) October 14, 2020

Google SERP Changes

Free Shopping tab listings are now launched globally

Brodie has noticed that free Shopping tab listings, which are rolling out as part of the SaG (Surfaces across Google) release, are now appearing across various locations. He is also seeing a SaG reporting tab in Merchant Center.

Some great SEO news to end the week: free Shopping tab listings are now available globally 💥

Seeing them appear across various locations, along with the SaG reporting tab in Merchant Center (important)

Learn about GA tracking, use of reviews & more: https://t.co/d1vYZaJDRW

— Brodie Clark (@brodieseo) October 16, 2020

SEO Tips

Reminder: if it is not on your mobile page it will not count for indexing

There was a tweet this week that gathered a lot of attention, saying (paraphrased), “John Mueller said that content that is only on desktop and is not seen on mobile will not be indexed by Google”. However, we agree with Barry Schwartz when he says that this isn’t new. Google has said many times that content on your site should be 1-to-1 on mobile vs. desktop, and this includes things like schema, links, and more.

John later joked that Google should rename mobile-first indexing to mobile-only indexing. Remember, Google isn’t indexing both versions of the site -- if you’re part of the 70% of sites that have been moved to mobile-first indexing, this is the sole version getting indexed.

If you’re still stumped about MFI, our guide has you covered! There are many interesting tidbits, including what happens when the mobile version of your site has content that isn’t available on the desktop version (hint: it’s not overly common but it can be a good thing!).

Google relies on consistent signals from across the web

While this may have been a somewhat sarcastic tweet from Gary, it is a healthy reminder that Google looks at signals from all across the web for what users are expecting from the search results.

business: "we changed our name to 'Bar' and going forward you shall call us 'Bar'"

literally everyone: "no, we're gonna call you 'Foo' for a few months more cos we're humans

[a week later]

b: "why is Google still calling us 'Foo'?!"

Google: literally everyone's calling you that pic.twitter.com/gb2DEsKjMZ

— Gary 鯨理/경리 Illyes (so official, trust me) (@methode) October 14, 2020

We often see businesses online complaining about things being incorrect in the SERPs (such as their founded date, for example). Unfortunately for them, Google is picking up signals which validate the year it is displaying in, say, a Knowledge Panel or a featured snippet. In this case, it’s up to the site owner to work harder to generate plenty of useful signals, and also to ensure that the messaging is consistent.

A series of small things a new brand can do to help build trust

Carrie Rose has created a list of tasks that will help a brand build trust. Here are a few we really like as they’re great for building trust with customers and Google:

- Build out your about page and make it detailed - detail in your about page is super important to send Google trust signals and share who and what your business stands for.

- Have great reviews on site - Google looks at review signals, and so do customers. When was the last time you bought something you were unsure of without looking at the reviews?

- Encourage reviews after purchases - You need to get reviews somehow, so once someone makes a purchase, this is a great way to obtain one.

Check out the thread for more!

New start ups using paid social to build brand and drive traffic - one of their biggest problems is being trusted.

The ads may be driving the traffic but little sales because your customers are questioning it’s legitimacy

To improve looking like a real business: (1)

— Carrie Rose (@CarrieRosePR) October 15, 2020

It seems How-to schema has fully rolled out on desktop, plus some interesting findings related to it

From his testing of How-to schema, Brodie found that on desktop this markup is only shown on page one, and only for the first three eligible sites (i.e. the sites using How-to schema).

Position one on desktop won't show the How-to rich result. If the top result has the proper markup, it still counts as one of the three eligible sites, meaning only two results will show How-to schema (this is all specific to desktop results).

Well, that makes things interesting. How-To Schema rich results on desktop operate differently to all other rich results on Google. They don't appear in position 🥇 https://t.co/hrRUqYpRr6

— Brodie Clark (@brodieseo) October 19, 2020

Brush up on your Google Search operators

A search operator is a character or string of characters used in a search to narrow the focus of a query. Google uses NLP and machine learning to try to understand what you are looking for with each search and will display what it thinks the user intended. However, if you learn how to use operators well, they are great for content marketing and for focusing your campaigns.

There’s also a nifty section (25 in total) for link building search operators!

Check out @internetkarlie's tips on how Google search operators can level-up your brainstorming, content creation and link building campaigns.

Search operators can also help:

– Pitch high-end news

– Link reclamation

– Find internal links

Blog post here: https://t.co/QB7j6wBKcn pic.twitter.com/kQd6djIRCc

— Siege Media (@siegemedia) October 12, 2020
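To show how operators combine, here is a small sketch of our own that builds a query string from a few well-known operators (`site:`, `intitle:`, exact-match quotes, and `-` to exclude a word). The function name `build_query` and the example values are hypothetical and are not taken from the Siege Media post.

```python
from urllib.parse import quote_plus


def build_query(terms="", site=None, intitle=None, exact=None, exclude=()):
    """Combine plain terms with a few common Google search operators."""
    parts = []
    if exact:
        parts.append(f'"{exact}"')          # exact-match phrase
    if terms:
        parts.append(terms)                  # ordinary keywords
    if intitle:
        parts.append(f"intitle:{intitle}")   # word must appear in the title
    if site:
        parts.append(f"site:{site}")         # restrict to one domain
    parts.extend(f"-{word}" for word in exclude)  # words to exclude
    return " ".join(parts)


def search_url(query):
    """Turn a query string into a Google search URL."""
    return "https://www.google.com/search?q=" + quote_plus(query)


if __name__ == "__main__":
    # Hypothetical link building prospecting query.
    query = build_query(terms="gardening", exact="write for us", exclude=["pinterest"])
    print(query)
    print(search_url(query))
```

Keeping each operator as its own argument makes it easy to generate a batch of prospecting queries from a spreadsheet of topics or domains.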

How to measure and optimize Largest Contentful Paint (LCP)

This is a really helpful guide from Aymen Loukil on how to assess one of the new Core Web Vitals metrics, Largest Contentful Paint (LCP).

New site speed guide ⚡️

- What is Largest Contentful Paint (LCP)?

- How to audit it?

- Tips for improving it

https://t.co/cDAMsdiHkL

— Aymen Loukil (@LoukilAymen) October 16, 2020

As we have covered a number of times in the newsletter, the LCP metric looks to reflect the loading performance of a web page by measuring how long it takes for the biggest element of the page to load.

Aymen goes into detail on the different tools available to measure LCP and provides some advice on how to assess which parts of different pages are likely to be considered as the largest element. For a homepage, it will likely be a slider image whereas for a blog post it could be the h1, hero image, or the first large block of text.

Aymen provides some good advice for improving upon these scores such as reducing the transfer size of critical elements and preloading images.

Google Help Hangout Tips

Google discusses E-A-T and how it relates to health and medical sites

In a recent help hangout, John said that Google can be a bit more critical of medical sites in terms of the information it finds there. John recommended looking at what those in the SEO community have said about E-A-T and following their advice. Marie has a great article on everything encompassing E-A-T as well as a guide to scientific consensus; both are great places to start.

More from @johnmu: Look at what SEOs are explaining about E-A-T. Understand how to best present your content, author profiles, & more. John can't guarantee that will improve rankings, but make sure you have all of those signals for users. Google can pick those up too: https://t.co/IPg9MI5r08

— Glenn Gabe (@glenngabe) October 16, 2020

Marie went further and created a thread on everything from the recent hangout that discusses E-A-T. One of the main takeaways: show the searcher that you can be trusted by having the signals that indicate this. This means taking action. Don’t just hope the rankings will change; Google needs to know that you are worth ranking.

Interesting thoughts on medical EAT in this help hangout.

This question was about a psychology site that loses out consistently on rankings for alternative psychological treatments. Big authorities always rank there. https://t.co/T81vyA501i

(1/5) pic.twitter.com/7tGYj7HxlT

— Marie Haynes (@Marie_Haynes) October 20, 2020

Interesting comments about frequent changes to your meta robots tags

John says to try not to change the meta robots tags (index/noindex and back) too often because frequent fluctuations can throw Google off. If a page is noindexed for a longer period of time, Google may begin to crawl it less frequently.

In this particular case (the question posed in the hangout), a webmaster was often fluctuating between index/noindex and not seeing the intended URL appear in Search quickly once they opted for indexing it. The advice? Maintain consistency -- if you really want pages to be indexed, keep them indexed.

Changing the meta robots tag frequently (indexed to noindexed & back)? Via @johnmu: In general, the fluctuations b/t indexed & noindexed can throw off Google. G might crawl less frequently after a url is noindexed for a while. Stability is optimal: https://t.co/jAWTEKbSS5 pic.twitter.com/4o9Z0gWPAm

— Glenn Gabe (@glenngabe) October 19, 2020

Could slower URLs be negatively impacting the rest of your site?

Google tries to be as granular as possible and recognize the individual parts of your site. However, when it comes to speed, it depends on how much data Google has. Google uses Core Web Vitals and Chrome User Experience Report data to make decisions on your site. If you have a subdomain or subfolder that is slow while the rest of your site is fast, then Google will treat that section of your site as slow and it won’t impact the rest of your site.

Other Interesting News

“Request Indexing” in GSC is not working

We have disabled the "Request Indexing" feature of the URL Inspection Tool, in order to make some infrastructure changes. We expect it will return in the coming weeks. We continue to find & index content through our regular methods, as covered here: https://t.co/rMFVaLht6V

— Google Search Central (@googlesearchc) October 14, 2020

As of the morning of October 21, we still are not able to request indexing via GSC. We are not sure whether this is related to the indexing problems Google has suffered in the last month. Hopefully our ability to request indexing returns soon.

John Mueller did follow up to clarify that this does not affect regular crawling or indexing of pages.

A great summary of what is new regarding the recent ‘new’ Google Analytics announcement

Last week, Google announced that App+Web was being rebranded as Google Analytics 4, which will now be the main property type in Google Analytics. We talked about this briefly in last week’s edition of our newsletter, but at the time of publication, we mainly had just Google’s own announcement of this. We found this helpful post from Krista Seiden containing more detail on what new features we can look forward to utilizing in GA4.

Google had been rolling out some of the new features prior to the announcement of GA4’s rebrand, including e-commerce reporting and the addition of a native connector to GA4 in Data Studio. You can read more about these features in Krista’s article, if they are relevant for you. Also included in Krista’s article is a clear outline of the new features that are coming, as well as why they’re going to be useful. She’s created a helpful companion article which walks through GA4’s new navigation and reporting, and we think this is really helpful for people who use Analytics but wouldn’t consider themselves experts.

Possible data anomalies in Google Search Console for Web Stories

Google has noted certain data anomalies with Web Stories found in Search Console. Barry covered this over at SERoundtable.

Since October 6, you may have seen a spike in Web Story stats from Discover. Between September 25 and 28, 2020, you may have noticed a drop in clicks and impressions in Discover because Web Stories were shown less often at this time.

Justice Department set to file long-awaited antitrust suit against Google

This lawsuit claims that Google is responsible for anticompetitive conduct that monopolizes Search in favour of their own entities. Others have long speculated about this, as Google has had various methods of ensuring that their products stand above any others within their platforms. “There is little opportunity for competitors to make inroads”, according to the government. Google has stated that their competitive edge simply comes from the desire users have to use their products. The article on this is very lengthy, but if you’re interested in more detail we recommend reading it in full.

This Verge article has indicated that these charges were filed October 20th. It will be very interesting to see what comes of this lawsuit.

Is there any ‘indexing time’ related to backlinks?

John recently said that there is no set time for Google to crawl and index your backlinks. Depending on many factors, it could take anywhere from minutes to months.

https://twitter.com/JohnMu/status/1317432534716829696?s=20

WordPress owners, say goodbye to Facebook and Instagram support for WP embeds

Facebook and Instagram are dropping support for unauthenticated embedded content on WordPress sites starting in a few days (Saturday, October 24th to be exact). What this means is that if you have ever embedded content from Facebook or Instagram onto your WordPress website in the past, you should be checking to see if the content still displays.

Thankfully, there is a new plugin called oEmbed Plus, developed by Ayesh Karunaratne, that can help fix this problem. You still need to have a Facebook developer account, however, in order for this to work for you. According to SEJ, another option called Smash Balloon will help fix this without requiring you to create a Facebook developer account.

This is retroactive, so this has the potential to break a lot of embeds (reminder: broken embeds are bad UX!). This article from SEJ has more about what’s changing and why, as well as what publishers can do to prepare for it.

Local SEO

The last week has been quite steady for local flux. Everything is relatively low, meaning there were no significant algorithmic changes in the local sphere. Each week we’ll reference these numbers to keep you posted on any rumbling of algorithm updates that could affect you.

Local SEO - Tips

How to get a place label or icon in Google Maps

Wondering why your business doesn't have a place label or icon on Google Maps? How does Google decide who qualifies? This study looks at 12 different factors with help from @DanLeibson and @PlacesScout https://t.co/YgviWh8VAM

— Joy Hawkins (@JoyanneHawkins) October 20, 2020

Sterling Sky reveals some key qualifying factors that may help you understand your eligibility for a ‘Places’ label on Google Maps. Not every local business can have a place label on Google Maps because the result just wouldn’t be visually appealing or very user-friendly.

Based on their own study, here are some of their findings:

- Place labels that appeared without zooming had an average of 6,455 reviews.

- The older the listing, the more likely it was to have a place label.

- GMB listings that included a website were more likely to have a place label.

- Search volume is likely a very large determining factor.

- Your primary business category seemed to be a determining factor. Schools, emergency services, and entertainment venues, for example, weren’t lacking in place labels. Small offices such as law firms or dental practices seemed to be less likely to have a place label.

Local SEO - Other Interesting News

Asking webmasters to submit examples -- possibly inaccurate wait times showing in restaurant GMBs

Do me a favor. Google your favorite restaurant and check the wait times displayed in the Knowledge Panel. Add your examples to this thread: https://t.co/4uUoRiEHPi I can't find a single one that is accurate but yet Google is saying it's fine. pic.twitter.com/4QUmGejFnW

— Joy Hawkins (@JoyanneHawkins) October 15, 2020

There is a string of evidence suggesting that the wait times displayed in your local restaurants’ GMB listings are completely inaccurate. Joy Hawkins revealed in her help forum that these wait times are automatically generated from anonymized historical data. This issue has been brought forward to Google.

SEO Tools

New Microsoft Site Explorer shows how Bing sees your website

The new Microsoft Site Explorer examines your URL profile to give you a clearer picture of your site structure so that you can make improvements. It is broken down into excluded, indexed, warning and error URL categories. You can sort your own URL folders in the way that best suits you, and even use this as a URL inspection tool. It seems like a really well organized new feature!

Core Web Vitals report from Ryte is arriving soon

Ryte is coming out with a new feature that will report on your site’s Core Web Vitals. Eventually, it will be integrated with their tool’s Performance Report. As of now, it looks like this is still in limited availability, as the page says you need to contact Ryte’s sales team to get access to it. It’s great seeing SEO tools making your Core Web Vitals easier to understand, visualize and optimize!

I've been playing around with this report and I fully love it ⚡️⚡️⚡️

Announcing our new Web Vitals report, coming very soon to our Performance reports! https://t.co/C7Rq5Sbj8H pic.twitter.com/YKVyDYCG7Y

— Izzi Smith (@izzionfire) October 16, 2020

Recommended Reading

How to Maximize your Pages’ CTR in Search Results Besides Improving your Rankings – Aleyda Solis

https://www.aleydasolis.com/en/search-engine-optimization/improving-pages-ctr-search-results/

October 16, 2020

In her article, Aleyda suggests a few alternatives - that do not involve better ranking - to get a better click-through-rates. Here are our two favourites:

1. If the page with low CTR is the well ranked page for your meaningful queries, there are a few things that could be causing the low traffic. Aleyda lists the following:

- Ads: If there is a high number of ads on that query’s SERP, they can take traffic away from your page, as clicks would be diverted to them.

- Search intent is satisfied on page: If the SERP itself satisfies user intent (Featured Snippets, Knowledge Panels, etc.), it can result in no clicks at all.

2. Other things you can do to optimize and improve the CTR:

- Make sure that your page titles and meta descriptions fit your meaningful queries: A good way to optimize this is to compare your page with your competitors’ pages and try to spot keywords and titles that could work better for you.

- Featured Snippets/Rich Results: As we mentioned above, these on-page results can take away some CTR. However, if your page is the one showing a Rich Result/Featured Snippet on the SERPs, you could gain some extra CTR.

Image Packs in Google Web Search – A reason you might be seeing high rankings but insanely low click-through rate in GSC – Glenn Gabe

https://www.gsqi.com/marketing-blog/image-pack-rankings-in-google-web-search/

October 14, 2020

Glenn Gabe explains exactly why you may be seeing great impressions but little actual traffic. Image packs, which contain a series of images, could be the reason for the skewed results. After clicking an image pack result, you aren’t taken to the website, but rather to Google Images, with that image highlighted. The image showing on the initial search should only link to a Google Images URL, but since the actual image alt attribute is listed, it registers as an impression. Check out Glenn’s post for clarifying screenshots.

Another interesting finding is that this same situation occurs for knowledge panels that feature images as well. This is yet another reason why only looking at aggregate data can be quite misleading. If you’re ever wondering why you can see great rankings in Search Console but extremely low CTR, this may be the reason.
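If you want to hunt for queries that fit this pattern, here is a minimal Python sketch. It assumes you have already exported query-level data from Search Console into rows of impressions, clicks and average position. The `QueryRow` type, the sample rows and all three thresholds are our own illustrative assumptions, not something taken from Glenn’s post or from any Google tool.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    """One query-level row from a Search Console export (hypothetical shape)."""
    query: str
    impressions: int
    clicks: int
    position: float  # average position as reported in the export


def ctr(row: QueryRow) -> float:
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return row.clicks / row.impressions if row.impressions else 0.0


def flag_low_ctr(rows, max_position=3.0, max_ctr=0.01, min_impressions=100):
    """Return queries that rank well but earn very few clicks.

    The thresholds are arbitrary starting points (assumptions), not
    recommended values. Tune them to your own site.
    """
    return [
        r.query
        for r in rows
        if r.impressions >= min_impressions
        and r.position <= max_position
        and ctr(r) < max_ctr
    ]


# Made-up sample data for illustration only.
rows = [
    QueryRow("blue widgets", impressions=5000, clicks=20, position=1.8),
    QueryRow("widget repair guide", impressions=1200, clicks=180, position=2.4),
    QueryRow("widget photos", impressions=80, clicks=0, position=1.0),
]

flagged = flag_low_ctr(rows)
print(flagged)
```

Any query this flags is only a candidate. You would still need to check the live SERP to see whether an image pack or an image-heavy knowledge panel is what is earning the impressions.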

YouTube Dominates Google Video in 2020 – Dr. Peter J. Meyers

https://moz.com/blog/youtube-dominates-google-video-results-in-2020

October 14, 2020

This Moz study confirmed how much real estate YouTube takes up on Google Videos. By analyzing the first three video slots on SERPs, Moz found that YouTube had a consistent 94% presence throughout the SERPs, with Facebook and Khan Academy ranging between 1.0% and 1.9%. Another interesting discovery was related to “How to” SERPs, where YouTube videos spiked up to a 97-98% presence.

By having such a massive cut of Google Videos, YouTube’s dominance raises the question: Is this fair and/or is Google favoring their own platform?

Moz’s Dr. Pete says no. He suggests that it is unlikely that Google’s algorithm has a preference for YouTube videos, and that the issue could just simply be YouTube’s dominance over video hosting platforms.

As it can be easier to get a good result in Google SERPs by creating a video on YouTube for that query, a lot of companies have created their own YouTube channel to try and own that share of the market, thus creating more relevant YouTube videos for Google Videos.

How Pinterest runs Traffic-based Interlinking experiments for SEO – Pinterest Engineering

https://medium.com/pinterest-engineering/how-pinterest-runs-traffic-based-interlinking-experiments-for-seo-9cb2cbdba6f8

October 8, 2020

Pinterest’s “growth search traffic team” recently shared the details of the A/B tests they ran in order to verify their hypotheses about the benefits of a new internal-linking algorithm. First, they needed to prove that a page with an internal link will net more search traffic than an equivalent page without an internal link. Next, they needed to verify that when they remove a link to one page and link to another page instead, the traffic gained by the newly linked page outweighs the traffic lost by the page that is no longer linked. Third, having assigned priority scores to destination pages, they needed to prove that linking to the higher-score pages would lead to more gains than linking to the lower-score pages. Once these three hypotheses were validated, they were able to proceed with the new interlinking algorithm. Very cool!
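To make the three hypotheses a little more concrete, here is a rough Python sketch of the comparisons involved. This is not Pinterest’s code or methodology. The function names and every number below are made up for illustration, and a real experiment would also need a proper significance test rather than a simple comparison of averages.

```python
from statistics import mean


def relative_lift(control, treatment):
    """Relative change in average traffic of treatment pages vs. control pages."""
    base = mean(control)
    return (mean(treatment) - base) / base


def net_gain_from_swap(gain_new_page, loss_old_page):
    """Hypothesis 2: traffic gained by the newly linked page minus traffic
    lost by the page that is no longer linked."""
    return gain_new_page - loss_old_page


# Hypothetical daily search visits per page (made-up numbers).
unlinked_pages = [100, 110, 90, 100]
linked_pages = [120, 130, 110, 120]

# Hypothesis 1: pages that receive an internal link get more search traffic.
lift = relative_lift(unlinked_pages, linked_pages)
h1_supported = lift > 0

# Hypothesis 2: swapping a link is worthwhile only if the net gain is positive.
swap_gain = net_gain_from_swap(gain_new_page=25, loss_old_page=10)
h2_supported = swap_gain > 0

# Hypothesis 3: linking to higher-score pages yields a bigger lift
# than linking to lower-score pages.
lift_high_score = relative_lift(unlinked_pages, [130, 140, 120, 130])
lift_low_score = relative_lift(unlinked_pages, [105, 115, 95, 105])
h3_supported = lift_high_score > lift_low_score

print(round(lift, 2), swap_gain, h3_supported)
```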

How to Succeed in Enterprise SEO – Patrick Stox

https://ahrefs.com/blog/enterprise-seo/

October 8, 2020

The challenges of enterprise SEO are manifold: training and knowledge sharing, organization, achieving buy-in, securing and effectively marshalling the required resources, data collection and reporting. Patrick Stox at Ahrefs provides great high-level advice here on how to handle these challenges, whether you’re working in-house or as part of an agency. Patrick also provides a thorough list of project ideas — from content creation and optimization, to internal/external link building, to various technical SEO tasks (broken link reclamation, redirect correction, sitemaps, schema). While enterprise SEO can be a grind and presents unique challenges, it can also be highly rewarding if done well. As Patrick writes, “For the most part, if you get the basics right at the enterprise level, you’ll be doing better than most. Boring = $$$ when it comes to enterprise SEO.”

Recommended Reading (Local SEO)

What’s behind the badge that powers Google’s local trust layer? – Justin Sanger

https://searchengineland.com/whats-behind-the-badge-that-powers-googles-local-trust-layer-341987

October 13, 2020

Google has unveiled a new designation for Places in Google: trusted and untrusted. The difference? A Google guaranteed badge. It’s been touted as the new era of Google My Business and will see GMB shift to a world where monetization plays an important role. This change means that Local Services Ads (LSAs) are no longer the only listings that can carry the ‘Google guaranteed’ designation.

You’re probably well aware by now that Google recently introduced an option to upgrade your GMB profile for $50/month. There’s been plenty of speculation about how this’ll all play out long term, and this news is further evidence of the intentions behind Google’s new local strategy. As we mentioned in previous newsletters, the fee gives you a profile badge, along with several other additions including call recordings, Google support, and more.

GMB has historically been free and, for now, it’ll continue to be. Many SEOs have speculated that monetization of GMB would someday come, and now it seems that if you want to stand out from the pack, you’ll need to contribute to Google’s revenue growth.

For any of you who might be curious, the current sign-up process has been said to be time-consuming and a little frustrating. It also involves a thorough examination of your staff and business qualifications, which you should be aware of ahead of time. This may turn out to be a helpful ranking factor (most likely an indirect one) and should prove to be a worthwhile investment when it comes to earning customers.

Jobs

JOB OPPORTUNITY X2 🚨@riseatseven are hiring for 2 positions

- Technical SEO Strategist

- Analytics & CRO Strategist

Great chance to work with @dergal so you don’t want to miss out!

Apply now using the email within the job ad!

£25-£40k Salary 🗣

RT, Share, Like, APPLY pic.twitter.com/R6w6hVoqMF

— B-DigitalUK (@BDigital_UK) October 13, 2020

Chrome Security is looking for a Technical Program Manager! Work with an amazing team, solving difficult problems, and help keep Chrome and the Web safe for everyone. 🕸️🔒🪲👩💻 https://t.co/wS6KGxYFQk

— Andrew Whalley (@arw) October 19, 2020