A new Google research paper called "Refreshing Large Language Models with Search Engine Augmentation" shows how the accuracy of LLM chatbots like ChatGPT or Bard can be improved by injecting fresh information retrieved from a Google search into their prompts.

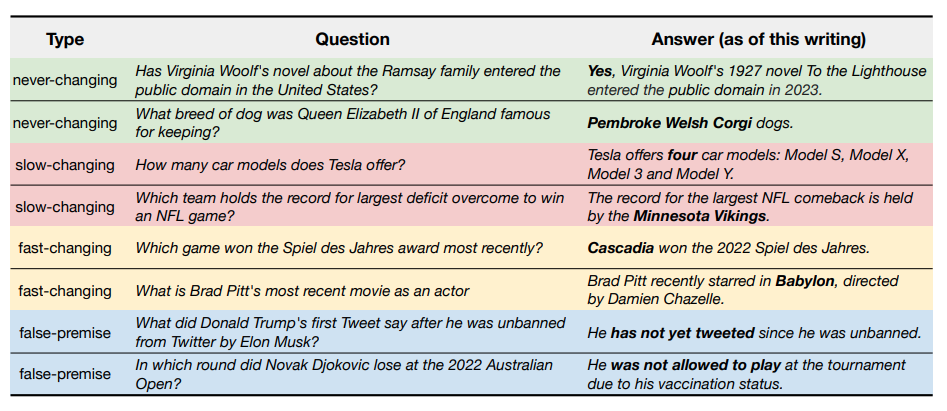

The researchers used a method called "FRESHPROMPT" to improve a language model's answers by augmenting its prompt with information from a Google Search. This helps when fresh information is needed to answer a question properly. They also addressed "false premises": questions that rest on an incorrect assumption and essentially ask the LLM to make up information.
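To make the idea of search-augmented prompting concrete, here is a minimal Python sketch in the spirit of FRESHPROMPT. It is not the paper's code. The `Evidence` fields, the `build_fresh_prompt` helper, the prompt wording, keeping only the first `k` results, and ordering evidence oldest-first so the newest item sits closest to the question are all assumptions made for illustration. The actual Google Search call is left out; the function simply takes search results you have already retrieved.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Evidence:
    # One retrieved search item (organic result, answer box, QA-platform answer, etc.).
    # These field names are hypothetical, not the paper's actual schema.
    source: str
    title: str
    snippet: str
    published: Optional[date] = None


def build_fresh_prompt(question: str, evidence: list[Evidence], today: date, k: int = 5) -> str:
    """Assemble a search-augmented prompt (a hypothetical FRESHPROMPT-style sketch)."""
    # Keep the first k results (assumed to be the highest ranked), then order them
    # oldest-first so the most recent evidence ends up closest to the question.
    # Undated items are treated as the oldest.
    chosen = evidence[:k]
    chosen.sort(key=lambda e: e.published or date.min)

    parts = []
    for e in chosen:
        when = e.published.isoformat() if e.published else "unknown"
        parts.append(f"source: {e.source}\ndate: {when}\ntitle: {e.title}\nsnippet: {e.snippet}\n")

    parts.append(f"query: {question}")
    # Tell the model today's date and ask it to flag false premises instead of inventing an answer.
    parts.append(
        f"As of today, {today.isoformat()}, answer the query using the most recent evidence above. "
        "If the query rests on a false premise, say so instead of answering."
    )
    parts.append("answer:")
    return "\n".join(parts)


if __name__ == "__main__":
    results = [
        Evidence("example.com/new", "Newer article", "Newer snippet", date(2023, 9, 1)),
        Evidence("example.com/old", "Older article", "Older snippet", date(2021, 1, 1)),
    ]
    print(build_fresh_prompt("Who is the current CEO of Example Corp?", results, today=date(2023, 10, 1)))
```

Sorting by date is one simple way to nudge the model toward the freshest information, and the closing instruction reflects the paper's concern with false premises. A real system would need to tune both.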

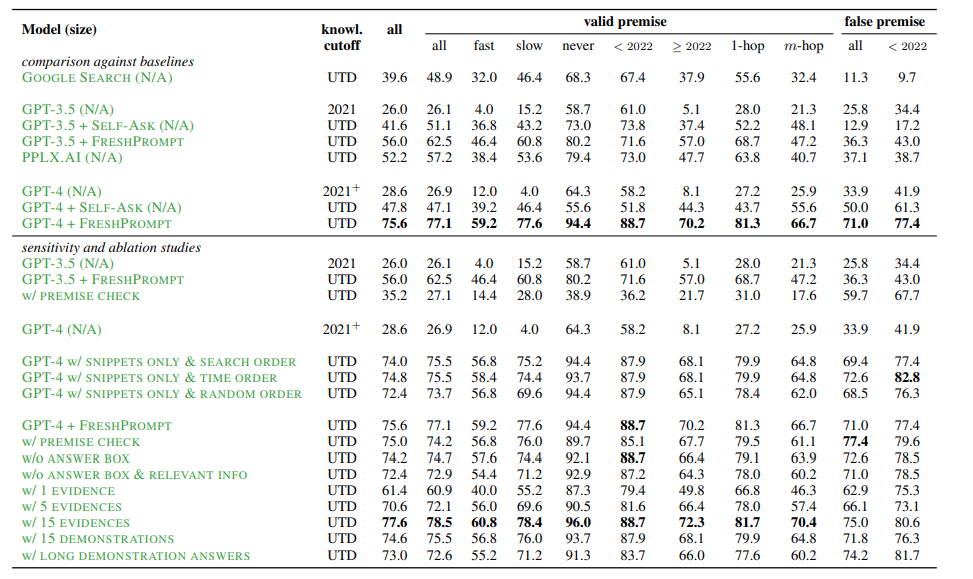

They got a group of Natural Language Processing researchers together and asked them to come up with questions that would likely be tricky for LLMs to answer. They then tested several LLMs on these questions, first on their own and then again with FRESHPROMPT. They carried out more than 50,000 human evaluations of the answers to judge whether they were accurate.

The tests found that augmenting an LLM's prompt with FRESHPROMPT greatly improved its accuracy on these deliberately tricky questions. GPT-4 plus FRESHPROMPT appears to have greatly outperformed Google Search itself, where Google's accuracy was judged by the accuracy of the result returned in the number one position. Note that this does not mean Google Search is generally inaccurate; these questions were specifically designed to be hard to answer.

Thoughts from Marie

I can see a world where the Google Search Box is a thing of the past, and instead, most people find the information they are looking for by starting with their AI assistant, Bard.

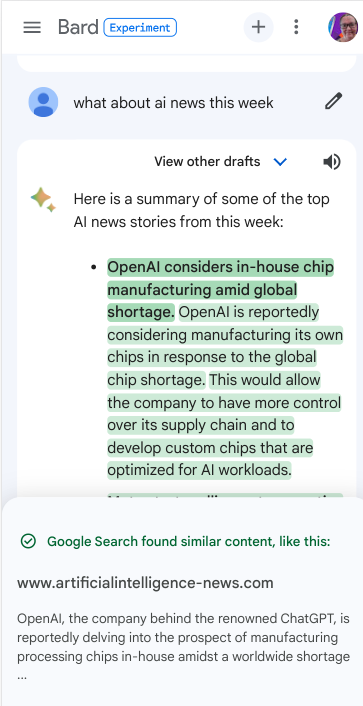

If you’ve used Bard and concluded that it could never be used for search due to its inaccuracies, you’re right. In its current state, Bard tends to hallucinate. It’s useful for many things, but not trustworthy enough for us to rely on it for anything important.

I’ve felt Bard’s accuracy has improved significantly since its first launch. It has become more and more useful to me, especially since Google added extensions that connect it to Gmail, Google Maps, Lens and more. I’m finding myself turning to Bard over ChatGPT for many things now. The feature that lets you double-check responses with Google makes them easier to trust.


To me, this paper shows that it is worth considering the possibility that one day, the vast majority of “searches” on Google will actually start as a conversation with an LLM such as Bard.

This could have huge implications for the SEO industry. Search behaviours would change dramatically. If Bard is good at giving answers, websites might be clicked on less, unless they have information that is helpful enough for users to seek it out beyond an AI-generated answer.

It's also possible that the way people shop and find businesses online will change significantly. Today we might search for "garden center near me". The search of tomorrow could start with a conversation with Bard about why our veggies aren't thriving, ideas on how to improve the garden and, when appropriate, recommendations of local places to buy supplies.

Google moving away from being the traditional search box

In the year 2000, Larry Page said, “We have some people at Google who are really trying to build artificial intelligence…To do a perfect job of search you could ask any query and it would give you the perfect answer, and that would be artificial intelligence.”

In 2022, Google told us that we will eventually stop thinking of Google as a search box; instead, Google is evolving to connect people with information in ways that go beyond what we have traditionally thought of as search.

In last week’s Pixel 8 unveiling, Google announced Assistant with Bard, saying, “This conversation overlay is a completely new way to interact with your phone.”

Google says, “You can interact with it through text, voice or images — and it can even help take actions for you. In the coming months, you’ll be able to access it on Android and iOS mobile devices.”

The New York Times reported earlier this year that Google was working on Project Magi, a whole new search engine, one that would offer users a far more personalized experience.

It is not hard to imagine Google becoming more of an AI assistant than a search engine.

What can we do to prepare?

We need to pay close attention to what happens as more and more people use Bard. I would encourage you to spend time each day using Bard to learn more about how it works and, especially, how and when it recommends websites. For each question I ask Bard, I use the Double Check with Google feature at the bottom and look at what types of websites it uses to back up its responses. I often find myself visiting these websites to get more information. I think it’s possible that being shown as a website that backs up Bard’s answer will become very important!

Here is one final interesting thing to note from this paper. The researchers describe several kinds of information they used when calling on Google Search to augment a prompt:

“We retrieve all of the search results, including the answer box, organic results, and other useful information, such as the knowledge graph, questions and answers from crowdsourced QA platforms, and related questions that search users also ask.”

I found it very interesting that part of the information that is used in FRESHPROMPT is “answers from crowdsourced QA platforms,” especially considering how the latest helpful content update and the August Core update elevated sites like Reddit and Quora in the search results.

I think it’s possible that the helpful content system is preparing Google’s search results for a world where, instead of searching, we converse with our AI assistant, which will, in turn, give us the most helpful answer, sometimes including websites. Our goal, as always, should be to produce content that our audience will find helpful.

The word "helpful" was all over Google's announcements this week.

This blog post originally started out as a newsletter entry.
