MHC is closing down our disavow blacklist tool

A lot has changed since Google first gave us the disavow links tool in 2012. Back then, we found that the more unnatural links we could find and disavow, the better our chances of seeing improvement. Creating the blacklist saved us many hours of link auditing time as we were able to easily find and disavow links from domains that we knew to be spammy.

However, things have changed over the years.

We still do recommend disavow work for some websites. But after years of disavowing links for many sites we feel that most do not need to disavow. For those sites that do benefit from disavow work, the types of links we need to disavow are ones that are not on our disavow blacklist, and as John Mueller has said, are ones that a tool is unlikely to identify.

https://twitter.com/JohnMu/status/1074693069616898049?s=20

In this article, we’ll explain:

- Why we created the disavow blacklist and how it helped many business owners

- How disavowing changed in 2016

- How to determine whether you should be disavowing links in 2022 and beyond

- Whether there is value in using tools such as Semrush’s toxic links tool to determine which links to disavow

- Why we no longer recommend use of our blacklist

Why did we create the disavow blacklist?

In 2012 and 2013, the majority of the work I did was in helping websites remove unnatural links penalties bestowed by Google. My process, which is laid out in our book on how to remove manual actions, was to find as many links as we could pointing to a site and then manually review them to decide which ones would be considered unnatural based on Google’s documentation on link schemes. It took ages to review links one at a time, but I have found that this is by far the most accurate way to decide which links to keep and which to disavow.

In the early days of doing this work, we saw many sites improve after disavowing.

Prior to the release of Penguin 4.0 in 2016, we saved ourselves hours of link auditing by running a site’s link profile through our disavow blacklist, which made it easy to find links from low quality directories and other sites that only ever tend to link out with spammy links.
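To make that filtering step concrete, here is a minimal sketch of how a link profile might be run against a list of known spammy domains. This is not the code behind our tool. The function names and the example domains are hypothetical, and it assumes the link export is simply a list of backlink URLs.

```python
from urllib.parse import urlsplit


def linking_domain(url: str) -> str:
    """Return the lowercased host of a backlink URL, without a leading 'www.'."""
    host = urlsplit(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def split_by_blacklist(backlinks, blacklist):
    """Split backlinks into (flagged, remaining) based on the linking domain.

    `blacklist` is a hypothetical set of domains already known to be spammy.
    """
    flagged, remaining = [], []
    for url in backlinks:
        domain = linking_domain(url)
        # Match the blacklisted domain itself or any of its subdomains.
        hit = any(domain == b or domain.endswith("." + b) for b in blacklist)
        (flagged if hit else remaining).append(url)
    return flagged, remaining


# Illustrative data only; these are placeholder domains.
backlinks = [
    "https://www.spam-directory.example/listing/123",
    "https://news.example.org/story",
]
flagged, remaining = split_by_blacklist(backlinks, {"spam-directory.example"})
```

The links in `remaining` are the ones that would still need a manual review.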

With the launch of Penguin 4.0, Google told us that they now felt confident that their algorithms could simply ignore spammy, low quality links. As John Mueller said, “For the most part when we can recognize that something is problematic and any kind of a spammy link, we will try to ignore it.” He also said that in some cases if there was not much left after all of the ignoring was done, then Google’s algorithms may distrust the site — “We’ll work to ignore the irrelevant effects, but if it’s hard to find anything useful that’s remaining, our algorithms can end up being skeptical with the site overall.”

How our philosophy on disavowing changed in 2016

Our philosophy since Penguin 4.0 has been to do the following when sites contact us concerned about the quality of their inbound link profile:

- Determine whether the site has a significant number of inbound links that represent genuine attempts to manipulate PageRank. (Examples include paid links, widescale article marketing and other links that go against Google’s documentation on link schemes.)

- If so, we manually review one link from each linking domain and make a decision on disavowing those links.

- While we are doing this, we also disavow the low quality links that we find using our disavow blacklist.
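Picking one link per linking domain for manual review can be sketched roughly as follows. This is an illustration rather than our actual workflow: the function name is hypothetical, and it groups by full hostname, which is a simplification (subdomains of the same site are treated as separate hosts).

```python
from urllib.parse import urlsplit


def one_link_per_domain(backlinks):
    """Map each linking host to the first backlink seen from that host."""
    sample = {}
    for url in backlinks:
        host = urlsplit(url).hostname
        if host:
            # Keep only the first link from each host; a reviewer can
            # open it and decide how to treat the whole domain.
            sample.setdefault(host, url)
    return sample


# Illustrative data only; these are placeholder domains.
to_review = one_link_per_domain([
    "https://blog.example.com/post-1",
    "https://blog.example.com/post-2",
    "https://directory.example.net/listing",
])
```

The decision on each sampled link is still a human judgment call; the code only reduces how many links need to be opened.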

While there is no harm in disavowing low quality spammy links, it likely does not help improve rankings. We believe that Google’s algorithms are already ignoring these links. It has been a long time since I can recall seeing improvement we attributed to disavowing solely the types of links that are in our blacklist. When we do see improvements these days after disavowing, it is always in sites where we have disavowed links that were purposely made for SEO and very little else.

What is an unnatural link vs. a spammy link?

Every site gathers links from low quality sites. This does not mean that they are building links that contravene Google’s guidelines regarding linking. These guidelines tell us that an unnatural link is one that is “intended to manipulate PageRank or a site’s ranking in Google search results.”

Spammy links

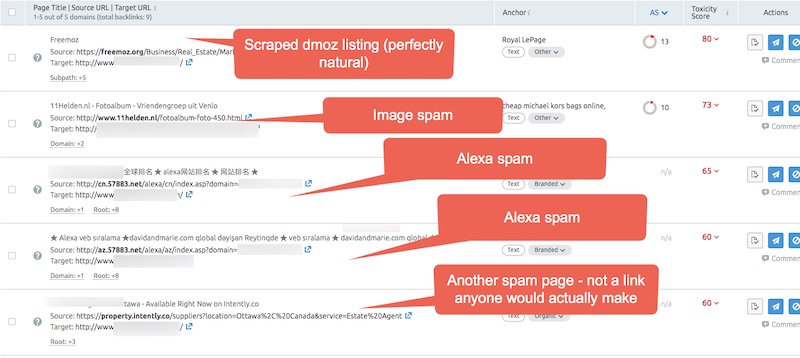

The following are all examples of links pointing to mariehaynes.com which we would consider spammy. Google is likely already ignoring these. These were not created to manipulate our PageRank and rankings. They are not causing a penalty or demotion in any way. Disavowing these will not cause us to see any improvements.

- Pages that scrape the content of authoritative sites and link back to you. If I get an article published on Moz, I’ll soon get hundreds of links like this from scraper sites.

- Random spammy looking sites that make no sense

- Sites with domain or keyword stats

Many other types of links fall into the category of spammy links that Google’s algorithms likely just ignore, including comment spam and links on spammy wallpaper image pages.

While there is no harm in disavowing these links other than the time spent analyzing them, there is likely no benefit either.

Unnatural links

Unnatural links are links that were created to try and game Google’s algorithms. Paid links are the obvious example. Buying links in articles is another. Creating large amounts of content to be published on other sites across the web so that you can get a link back is against Google’s guidelines as well.

When this discussion comes up, there is always someone who says, “Yeah, but how would Google know whether I bought this link or a competitor who is trying to do negative SEO did?”

In our experience, which amounts to ten years of actively working with sites to remove manual actions, we have yet to see a site that was given an unnatural links manual action solely because someone else was building links to the site. Every site that we have helped to remove an unnatural links manual action had actively been creating its own links to manipulate Google.

But could Google get it wrong algorithmically? Could their algorithms possibly demote a site unfairly because it has a very large volume of spammy links pointing to it?

Google’s John Mueller said that “where there is a clear pattern of spammy and manipulative links by [a] site, Penguin may decide to simply distrust the whole site.” He says that Google’s algorithms may lose trust in your site if, “our systems recognize that they can’t isolate and ignore these links across a website.”

If Google’s algorithms detect that there are links pointing to your site that represent attempts at manipulation, but they can’t easily determine which of those links are earned mentions and which are manufactured by you, then you may have a problem. Disavowing the links that were made for SEO purposes and keeping those that were naturally earned can sometimes result in improvements.
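Once a manual review has decided which linking domains were made for SEO, the disavow file itself is simple: a plain text file with one `domain:` entry (or one URL) per line, and `#` for comment lines. Here is a minimal sketch of turning review decisions into that format. The function name, the `"disavow"`/`"keep"` labels and the example domains are all hypothetical.

```python
def build_disavow_file(decisions, note=""):
    """Build disavow file text from manual review decisions.

    `decisions` maps a linking domain to "disavow" or "keep"
    (labels chosen for this sketch). Only domains marked
    "disavow" are written out; everything else is left alone.
    """
    lines = []
    if note:
        lines.append("# " + note)  # lines starting with '#' are comments
    for domain, verdict in sorted(decisions.items()):
        if verdict == "disavow":
            lines.append("domain:" + domain)
    return "\n".join(lines) + "\n"


# Illustrative data only; these are placeholder domains.
text = build_disavow_file(
    {
        "paid-links.example": "disavow",
        "earned-mention.example": "keep",
    },
    note="Links built for SEO, reviewed manually",
)
```

Note that nothing is disavowed unless a reviewer has explicitly marked it, which keeps naturally earned links out of the file.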

But disavowing spam links, the types of links that we point out in our disavow blacklist, is not helpful here. We believe Google is easily able to isolate and ignore that type of link. We have not seen evidence to the contrary despite looking for it. Trust us, we would love to be able to recommend and do more disavow work!

Should you disavow links from toxic backlink reports from tools like Semrush?

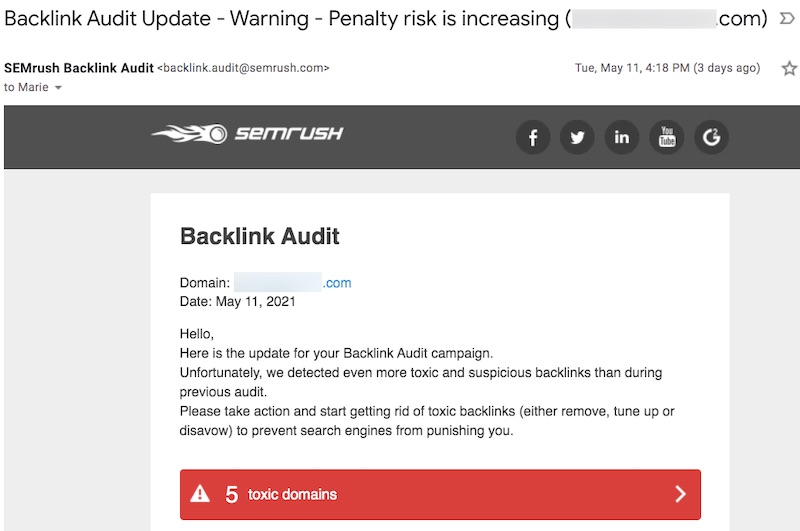

I love Semrush. At MHC, we use Semrush daily to assist with technical audits, competitor research, keyword research, and more. But we are not fans of their toxic links report. This is not a surprise to Semrush as I’ve had several conversations with them to discuss this. Semrush is not the only tool to provide a toxic links report, but it is the one that we get asked about the most.

Semrush says that with their tool you can “audit your backlinks, analyze the toxic signals, send emails to site owners and remove harmful links that may cause a penalty from search engines.”

This is inaccurate for several reasons:

1) The links Semrush has marked as toxic are almost entirely spam links that we believe Google is already ignoring.

2) Going through the work of emailing site owners to get links removed is something that is only recommended if you are removing a manual action for unnatural links.

3) There is no evidence that this type of “toxic” link (not a phrase Google uses) could cause a penalty from search engines.

If you sign up for Semrush’s link report, you may get an email like this – “Warning – Penalty risk is increasing”. I received this email for one of my sites.

Every one of the links marked as “toxic” was one that I would have marked as spam and that is almost certainly being ignored by Google.

While there is probably no harm in disavowing these links, you are not likely to see any improvement as a result. Disavowing is meant for sites trying to remove a manual action and for those who have been actively building links for the purpose of improving rankings.

Conclusions

We’re shutting down our disavow blacklist because we do not feel it is helpful anymore. While it can point out many of a site’s spammy or “toxic” backlinks, we really do believe Google when they say they can ignore those links. Disavowing spammy backlinks will not hurt a site, but it is unlikely to help.

For several years we found it helpful to use the tool to remove the spammy links from our link spreadsheets so that we could audit what remained. We still do this internally. But we do not want people to use this tool, or any other tool, including Semrush’s toxic link tool, to determine which links to disavow.

If you are a current subscriber of the MHC disavow blacklist, you should have been contacted earlier this month regarding your subscription. Thank you for your support.

If you have questions about your link profile, your link building strategies or anything else related to link quality, click here to book a link overview or time with an MHC link auditor.
